A quick walkthrough of LLMs, Large Action Models, and Agents

Large Action Models (LAMs) are LLMs specifically trained for action generation, or generalist LLMs like GPT-4 prompted for action. Agents leverage them to achieve users’ goals. LaVague is a framework for building AI Web Agents.

Executive summary:

- LLMs are game changers in the industry because they generate high-quality text. That text can be code or arguments for function calls, both of which can be used to perform actions.

- Large Action Models (LAMs) is a recent term that refers to LLMs either specifically trained for action generation, or generalist LLMs like GPT-4 that are prompted to generate actions.

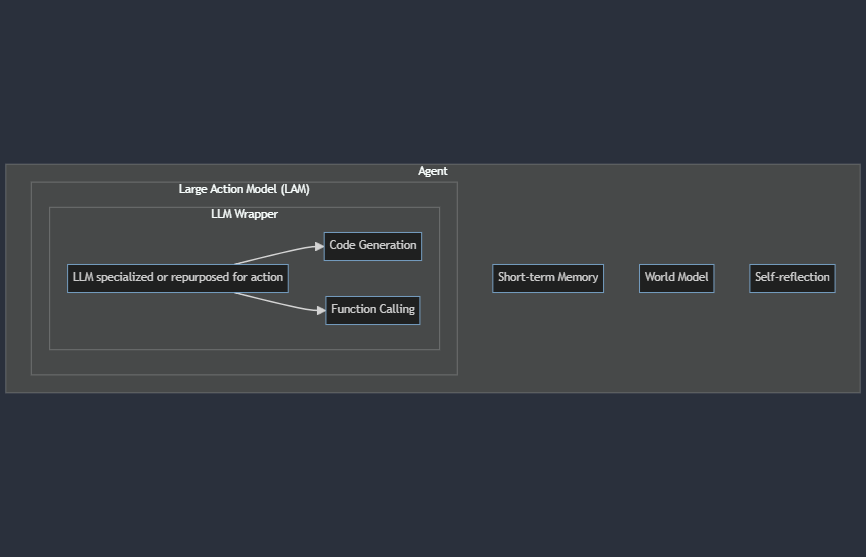

- Agents are generic entities that receive an objective from users and perform the necessary actions to achieve the desired goal. They use LAMs but also components such as short-term memory, world models, or self-reflection to solve a given problem.

- LaVague is a LAM framework to help developers build AI Web Agents. You can find more in our docs, or reach out to us on our enterprise channel for a collaboration.

Intro

Generative AI, especially LLMs (Large Language Models), has seen a huge boost in adoption, as it makes it possible to tackle previously unsolved problems, from code fixing to email summarization to information retrieval over large corpora of data.

Among their game-changing capabilities, code generation and function calling are of particular interest, as they can be leveraged to perform actions and navigate environments, from browsing the internet to navigating internal native apps.

Systems that can interact with complex environments over multiple steps, reason about their observations, and take the appropriate actions are called Agents in Reinforcement Learning.

In this article, we will look in more detail at how Agents, LLMs, and LAMs are interconnected, so that readers can better understand what each of these terms means and navigate recent advances.

Overview of LLMs

As a reminder, Large Language Models are recent AI models excelling at modeling the distribution of words given a specific context.

The most successful ones we know today are called autoregressive models, which means that given some input, like “The cat sat on …” they will output the most likely next word, for instance, “sofa”.

Though non-autoregressive models exist and are quite popular, like BERT, we will focus on the autoregressive ones here as they are able to generate arbitrary text given some request.
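
To make autoregressive generation concrete, here is a minimal sketch that uses a toy lookup table instead of a real LLM. The `NEXT_WORD_PROBS` table, its probabilities, and the `generate` helper are all invented for illustration. A real model conditions on the whole context rather than only the last word, but the loop of predicting the next word, appending it, and repeating is the same idea.

```python
# Toy next-word probabilities (hypothetical values, for illustration only).
# Unlike a real LLM, this table only looks at the previous word.
NEXT_WORD_PROBS = {
    "the": {"sofa": 0.6, "cat": 0.4},
    "cat": {"sat": 0.9, "on": 0.1},
    "sat": {"on": 1.0},
    "on": {"the": 1.0},
}


def most_likely_next(word):
    """Return the most probable next word, or None if the word is unknown."""
    candidates = NEXT_WORD_PROBS.get(word)
    if not candidates:
        return None
    return max(candidates, key=candidates.get)


def generate(prompt, max_new_words=3):
    """Greedy autoregressive decoding: each new word is fed back as context."""
    words = prompt.lower().split()
    for _ in range(max_new_words):
        next_word = most_likely_next(words[-1])
        if next_word is None:
            break  # nothing known about this word, stop generating
        words.append(next_word)
    return " ".join(words)


print(generate("The cat sat on", max_new_words=2))  # the cat sat on the sofa
```

Production models usually sample from the predicted distribution instead of always taking the most likely word, which is one reason the same prompt can yield different outputs.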

ChatGPT was one of the first LLMs able to give relevant answers to human queries in natural language.

One of the main strengths of LLMs is their ability to handle a wide range of scenarios, as many problems can be framed as taking a sequence of tokens as input and producing a sequence of tokens as output.

For instance, we can see it in practice with:

- Code fixing: input = code to be fixed, output = correction

- Email summarization: input = full text, output = summary

- Sending an email automatically with some data: input = data to send, output = API to call to send that data (e.g. Gmail)

The last one is an example of an action, which one could define as an operation that can persistently affect the outside world, such as sending an email, versus something purely local, such as doing some in-memory computation that is lost after the agent is destroyed.

LLMs are therefore quite powerful for understanding users’ queries and interacting with the world, either by generating code on the fly to perform the desired action or by choosing an API they have access to and calling it with the right arguments.
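
As a rough sketch of the second option (choosing an API and calling it with the right arguments), the snippet below dispatches a JSON-formatted action to a matching Python function. Everything here is an assumption made for illustration: the `send_email` tool only records the call instead of contacting a real mail API, the JSON shape is one common convention rather than a standard, and the model output is hard-coded where a real system would query an LLM.

```python
import json

# Hypothetical tool: records the email instead of sending it through a real API.
SENT_EMAILS = []


def send_email(to, subject, body):
    SENT_EMAILS.append({"to": to, "subject": subject, "body": body})
    return f"email sent to {to}"


# Registry of the tools the model is allowed to call.
TOOLS = {"send_email": send_email}


def execute_action(model_output):
    """Parse a JSON action produced by a model and call the matching tool."""
    action = json.loads(model_output)
    name = action["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**action["arguments"])


# In a real system this string would come from the LLM; here it is hard-coded.
model_output = json.dumps({
    "name": "send_email",
    "arguments": {
        "to": "colleague@example.com",
        "subject": "Weekly figures",
        "body": "Revenue is up 4% week over week.",
    },
})

print(execute_action(model_output))
```

Restricting execution to a registry of known tools is a deliberate choice in this sketch: the model only picks a name and arguments, and the surrounding code decides what is actually allowed to run.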

Overview of LAMs

Large Action Models can be seen as LLMs specialized in action generation, which, as mentioned previously, can be either code to be executed or the name of a function and its arguments.

In practice, we mostly see two kinds of LAMs:

- Specialist LLMs trained to be effective at generating actions

- Generalist LLMs prompted to be effective at generating actions

Indeed, there are two main ways to improve the performance of LLMs:

- Changing the weights (pretraining or fine-tuning)

- Eliciting capabilities by doing prompt engineering
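
To illustrate the prompt engineering route, here is a small sketch of a prompt that asks a generalist model to answer with a single tool call. The `build_action_prompt` helper, the tool descriptions, and the requested JSON shape are assumptions for illustration, not a format required by any particular model.

```python
def build_action_prompt(objective, tools):
    """Build a prompt asking a generalist LLM to answer with one tool call."""
    tool_lines = "\n".join(
        f"- {name}: {description}" for name, description in tools.items()
    )
    return (
        "You can use the following tools:\n"
        f"{tool_lines}\n\n"
        f"Objective: {objective}\n"
        'Reply only with JSON of the form {"name": ..., "arguments": {...}}.'
    )


# Hypothetical tool catalogue shown to the model.
tools = {
    "send_email": "Send an email to a recipient",
    "search_docs": "Search the internal documentation",
}

print(build_action_prompt("Email the weekly report to Bob", tools))
```

No weights change here: the same generalist model is steered toward action generation purely through the instructions and the list of tools it is shown.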

WebAgent is an example of an LLM-driven agent specialized for web navigation that combines a specialist and a generalist LLM for action:

- It uses HTML-T5, a specialist model for HTML summarization

- It uses Flan-U-PaLM, a generalist model for Python code generation

It can therefore make sense to combine generalist and specialist LLMs when designing a model, or a combination of models, for action generation.
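
The combination described above can be sketched as a two-stage pipeline. This is not WebAgent's actual code: both stages are simple stand-in functions where a real system would call trained models, and `click_element` is a hypothetical action name that exists only inside the generated string.

```python
from typing import Callable


def make_action_pipeline(
    summarize: Callable[[str, str], str],
    generate_code: Callable[[str, str], str],
) -> Callable[[str, str], str]:
    """Chain a specialist summarizer with a generalist code generator."""

    def pipeline(objective: str, raw_html: str) -> str:
        snippet = summarize(objective, raw_html)  # specialist step
        return generate_code(objective, snippet)  # generalist step

    return pipeline


def keyword_summarizer(objective: str, raw_html: str) -> str:
    """Stand-in specialist: keep HTML lines that mention a word from the objective."""
    keywords = [w.lower() for w in objective.split() if len(w) > 3]
    kept = [
        line.strip()
        for line in raw_html.splitlines()
        if any(k in line.lower() for k in keywords)
    ]
    return "\n".join(kept)


def template_code_generator(objective: str, snippet: str) -> str:
    """Stand-in generalist: wrap the snippet in a fixed, hypothetical action call."""
    return f"click_element({snippet!r})"


page = """
<div>Welcome to our site</div>
<button id="login">Login</button>
<footer>Copyright</footer>
"""

pipeline = make_action_pipeline(keyword_summarizer, template_code_generator)
print(pipeline("Click the login button", page))
```

The point of the split is that the code generator only sees the short, relevant snippet rather than the full page, which keeps its input small and focused.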

Overview of Agents

An Agent, in the Reinforcement Learning sense, is an entity that interacts with an environment to achieve a goal. The agent makes decisions and takes actions based on observations or states of the environment, aiming to maximize some notion of cumulative reward over time.
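
The observe, act, and reward loop in this definition can be sketched with a toy environment. The `CorridorEnv` class, its reward values, and `greedy_policy` are invented for illustration; the policy here is hand-written rather than learned.

```python
class CorridorEnv:
    """Toy environment (hypothetical): reach position `goal` on a line."""

    def __init__(self, goal=3):
        self.goal = goal
        self.position = 0

    def reset(self):
        """Start a new episode and return the first observation."""
        self.position = 0
        return self.position

    def step(self, action):
        """Apply an action (-1 or +1); return (observation, reward, done)."""
        self.position = max(0, self.position + action)
        done = self.position == self.goal
        reward = 1.0 if done else -0.1  # small cost per step, bonus at the goal
        return self.position, reward, done


def greedy_policy(observation, goal):
    """Move toward the goal based on the current observation."""
    return 1 if observation < goal else -1


def run_episode(env, policy, max_steps=20):
    """Run one episode and return the cumulative reward."""
    observation = env.reset()
    total_reward = 0.0
    for _ in range(max_steps):
        action = policy(observation, env.goal)
        observation, reward, done = env.step(action)
        total_reward += reward
        if done:
            break
    return total_reward


print(run_episode(CorridorEnv(goal=3), greedy_policy))
```

With these made-up rewards, reaching the goal in fewer steps yields a higher cumulative reward, which is the quantity an agent aims to maximize.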

The word Agent has been overused in recent months due to the popularity of LLMs. Here we will stick to the more formal definition from Reinforcement Learning, where agents are entities capable of agency, i.e. entities that have the following features:

- Autonomy: The ability to make independent decisions without external control. An agent determines its actions based on its own perceptions and internal mechanisms.

- Goal-directed behavior: The pursuit of specific objectives or goals. An agent's actions are aimed at achieving desired outcomes.

- Perception: The capability to sense and interpret information from the environment. This involves receiving and processing observations or states that inform decision-making.

- Action: The execution of behaviors or decisions that affect the environment. An agent takes actions to interact with its surroundings and influence future states.

- Adaptation: The capacity to learn from experience and improve performance over time. An agent adapts its strategy or policy based on feedback, often in the form of rewards or punishments.

Therefore, we do not consider simple RAG pipelines over some documents to be agents, and reserve that term for more complex AI systems capable of interacting with an environment in which they take actions to achieve a goal.

Several architectures have been proposed to create agents. One that we particularly like is the one proposed in Yann LeCun's paper, A Path Towards Autonomous Machine Intelligence.

The paper proposes an architecture for agents that consists of multiple modules, each responsible for different functions such as perception, world modeling, memory, and action generation. These modules work together to enable the AI to perceive its environment, react to it, reason about it, plan actions, and execute them, much like the human brain leverages and combines different cognitive processes.
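
As a very loose sketch of this modular idea, and not the architecture from the paper itself, the snippet below wires together a perception function, a world model, a cost function, a short-term memory, and an actor that plans by imagining each action. All names and the one-dimensional state are simplifying assumptions made for illustration.

```python
from dataclasses import dataclass, field


def perceive(raw_observation: str) -> int:
    """Perception: turn a raw observation into an internal state estimate."""
    return int(raw_observation.strip())


def world_model(state: int, action: int) -> int:
    """World model: predict the next state if `action` were taken."""
    return state + action


def cost(state: int, goal: int) -> int:
    """Cost: how far a state is from the goal (lower is better)."""
    return abs(goal - state)


@dataclass
class ModularAgent:
    goal: int
    actions: tuple = (-1, 0, 1)
    memory: list = field(default_factory=list)  # short-term memory of past steps

    def act(self, raw_observation: str) -> int:
        state = perceive(raw_observation)
        # Plan: imagine each action with the world model, keep the cheapest outcome.
        best = min(
            self.actions,
            key=lambda a: cost(world_model(state, a), self.goal),
        )
        self.memory.append((state, best))
        return best


agent = ModularAgent(goal=5)
print([agent.act(obs) for obs in (" 3 ", "5", "7")])  # [1, 0, -1]
```

Keeping each module separate means any one of them, for example the world model, could be swapped for a learned component without touching the rest.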

Use cases and opportunities of Agents

That looks nice, but why should one care about Agents?

Well, there are many problems where a solution would require agency. Many times, the answer to a specific problem requires an entity to forage for information, browse different sources such as a website, curate the information, and then communicate that information, for instance, by sending an email to a colleague.

The underlying capabilities needed for that are ones agents possess: understanding the objective, navigating through websites (which requires understanding the environment, i.e. webpages, and taking actions such as clicking on elements), and calling tools (e.g. sending an email with the output).

Here are a few use cases that Agents can uniquely solve thanks to their ability to make complex decisions and interact with the web:

- Data entry: navigate through complex websites, e.g. taxes, and fill forms

- Web testing: explore a website and perform tests to ensure the website behaves as expected

- Information retrieval: search online pages, e.g. internal or external documentation, to find information in cases where search engines fall short and precise, manual retrieval would otherwise be needed

Building Agents with LaVague

We have strived to make it straightforward to build AI Web Agents with LaVague.

Here is a glimpse of our Quick tour from our docs on how to get started:

First, install with pip:

    pip install lavague

Then we can create and launch an agent to browse the docs of Hugging Face PEFT easily:

    # Import the necessary components
    from lavague.drivers.selenium import SeleniumDriver
    from lavague.core import ActionEngine, WorldModel
    from lavague.core.agents import WebAgent

    # Set up our three key components: Driver, Action Engine, World Model
    driver = SeleniumDriver(headless=False)
    action_engine = ActionEngine(driver)
    world_model = WorldModel()

    # Create Web Agent
    agent = WebAgent(world_model, action_engine)

    # Set URL
    agent.get("https://huggingface.co/docs")

    # Run agent with a specific objective
    agent.run("Go on the quicktour of PEFT")

Code from our Quick tour

We have an example for each of these use cases that you can find in our docs:

- Data entry: We show how to automatically fill a form using LaVague to apply for jobs

- Web testing: We show how LaVague can take a Behavior Driven Development (BDD) file describing the scenarios to test, pilot a browser to execute each scenario, and then export the resulting Selenium code to a Pytest BDD file

- Information retrieval: We show how LaVague can navigate through a Notion workspace to find relevant information.

Conclusion

We have seen through this article the relationship between LLMs, LAMs, and Agents.

LLMs, which ChatGPT helped popularize, generate high-quality text. This capability fuels LAMs, which are LLMs specialized in action generation, whether through generated code or function calls.

Those LAMs are then the engine that powers Agents, which are generic AI systems able to interact with an environment (e.g., the web) to achieve users’ objectives. Agents can unlock a lot of value, as they can take over tasks that are low-value but necessary, such as legacy manual steps.

We have seen a few examples, from scaling Web QA to automating the use of SaaS tools (CRM, ERP, etc.).

If you are a company interested in leveraging Agents to 10x your productivity, or a builder of such tools who wants to partner with us, don’t hesitate to reach out through our enterprise channel.

If you are a user, builder, researcher, or hobbyist who is passionate about large action models, wants to implement the latest papers, or simply wants to chat, don’t hesitate to join our Discord!